CoreWeave Is Not a Cloud Provider. It Is a Compute Factory.

Dedicated AI Factory Operators Defined, Why Hyperscalers Cannot Fill the Gap, Offtake Structure as the New Business Model, What the Next Operator Category Looks Like

Welcome to Global Data Center Hub. Join investors, operators, and innovators reading to stay ahead of the latest trends in the data center sector in developed and emerging markets globally.

The Warehouse in New Jersey

In 2017, three former commodity traders leased warehouse space in New Jersey and filled it with NVIDIA graphics cards.

Michael Intrator, Brian Venturo, and Brannin McBee had spent their careers trading energy and risk.

They applied the same analytical framework to cryptocurrency mining that they had applied to commodity markets.

Find the asset with the best risk-adjusted return, secure the infrastructure to extract it, and scale.

The infrastructure they built for Ethereum mining was highly precise.

Proof-of-work mining places demands on a facility similar to those of deep learning: dense GPU deployments, specialized power distribution, and thermal management designed for intense heat loads.

The three founders built for the workload, not for the appearance of a data center.

Then the 2018 crypto crash gutted mining margins, and Ethereum's planned move to proof-of-stake put an end date on GPU mining. The mining business became unviable. The infrastructure remained.

What they had built, without designing it as such, was purpose-engineered compute infrastructure for GPU-dense workloads.

The pivot to AI compute in 2019 was a recognition of what they already had.

CoreWeave was a compute factory before it had a name for what it was.

What the Prior Five Articles Built

This series has assembled one analytical framework across five historical inflection points. Each article introduced one variable. This one assembles them in full.

"IBM Built the Factory. The Market Built a Different One" established the foundational pattern. Every compute era produces a dominant factory model optimized for the workload of its moment. When the workload outgrows the factory, a new one gets built by operators who recognize the mismatch early. The transition premium goes to the capital that positions ahead of it.

"Why AWS EC2 Rewrote the Economics of Compute Ownership" introduced the ownership variable. EC2 transferred factory ownership from tens of thousands of enterprises to a small number of hyperscale operators. The distributed returns of the client-server era consolidated into a concentrated ownership structure that has compounded for two decades.

"Open Compute Project: The Hardware Moat Facebook Built and Gave Away" introduced the design efficiency variable. Two factories at identical capacity produce different returns when one was engineered at the component level for its workload. The design gap is structural. Operational improvement cannot close it.

"Two GPUs: The Infrastructure Shift That Priced Most Investors Out" introduced the workload compatibility variable. A factory engineered for one workload does not automatically qualify for the next. AlexNet identified the shift in 2012. The infrastructure capital market took five years to price it.

"The Retrofit Problem: Why Legacy Data Centers Cannot Serve AI Workloads" named the consequence. Most of the global installed base cannot serve GPU-dense AI training workloads. The retrofit numbers do not work. The asset class that reads strong on conventional metrics is disqualified at the procurement specification level.

CoreWeave is the answer to the question all five articles were building toward. When the installed base cannot become the new factory, who builds it?

What the Pivot Revealed

The 2019 pivot from cryptocurrency mining to AI compute infrastructure was not a simple repurposing.

Intrator, Venturo, and McBee discovered that the infrastructure they had built for Ethereum mining had produced the specifications the AI training market needed and could not find elsewhere.

GPU density: The mining operation had been optimized for maximum GPU density per square foot, precisely the configuration AI training workloads require. The thermal architecture for that density was already in place. The power distribution to supply it was already engineered.

Offtake discipline: The commodity trading background had trained all three founders to think in production economics: build the factory only when you have committed buyers for the output. CoreWeave’s early growth was structured around committed customer contracts. The factory was built for a buyer, not built and then marketed.

Cost basis: The mining operation had been optimized relentlessly for energy cost per unit of compute output. That discipline transferred directly to AI compute economics. CoreWeave’s early infrastructure carried a cost basis that the general-purpose cloud operators, optimized for a different workload profile, could not match for GPU-dense training at scale.

These three characteristics (density, offtake discipline, cost basis) are the operational DNA of a compute factory.

The commodity trading background that looked like an outsider’s disadvantage was the structural advantage.

The Compute Factory Assembled in Full

This is the definition the series has been building toward.

A compute factory is a facility purpose-built for a specific workload, optimized at the input level for that workload’s binding constraints, owned by an operator whose entire business model is structured around producing that workload’s output at scale.

It has a specific input cost structure: power, silicon, cooling, interconnect. It has a specific production process: the architecture that converts those inputs into compute output at the lowest possible unit cost.

It has a specific output. And it has an owner whose returns depend entirely on the efficiency with which inputs are converted to that output.

The mainframe was a compute factory. IBM owned every layer. "IBM Built the Factory. The Market Built a Different One" traced what happened when the workload changed and the factory did not.

The hyperscale campus is a compute factory. Amazon, Google, and Microsoft own the infrastructure at scale and sell access by the unit. "Why AWS EC2 Rewrote the Economics of Compute Ownership" traced what happened when the ownership model changed and most capital missed the transfer.

CoreWeave is a compute factory.

It is purpose-built for GPU-dense AI training, optimized from the component level for that workload’s thermal and power requirements, and structured around committed offtake rather than speculative demand.

It is the answer to the question AlexNet raised in 2012 and The Retrofit Problem confirmed: when the workload outgrows the installed base, who builds the replacement?

CoreWeave is a different kind of operator from AWS. Lambda is a different kind of operator from Google Cloud.

They are the first generation of dedicated AI compute factories, a category that did not exist in any prior infrastructure cycle because the workload that created them does not resemble any prior workload.

The Market’s Misreading

The infrastructure capital market has applied the wrong analytical frame to the dedicated AI compute operators.

The default classification (neocloud, specialized cloud, GPU cloud) positions CoreWeave as a segment of the cloud market.

It belongs in a different market entirely.

Cloud providers sell general-purpose compute access across a broad workload profile to a broad customer base.

The economic model depends on utilization diversity — different customers using different resources at different times, averaging toward efficient aggregate utilization.

CoreWeave serves one workload category from infrastructure purpose-built for it.

The economic model depends on committed offtake: customers who have contracted for GPU capacity in advance, at volumes that justify the capital expenditure required to build the factory to serve them.

The Microsoft commitment that structured CoreWeave’s early growth was an industrial offtake agreement. The same structure justifies factory construction in every industrial sector where output must be committed before the factory is built.
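The contrast between the two economic models can be made concrete with a little arithmetic. The sketch below is illustrative only: the customer names, demand figures, price, and the take-or-pay contract form are assumptions, not CoreWeave or cloud-provider data. It shows why a cloud provider gains from customers peaking at different times, while a factory's revenue is fixed by what the buyer has committed to.

```python
# Illustrative only: every load, price, and contract term below is hypothetical.

# Demand in GPUs across four periods of a day for three hypothetical cloud
# customers whose peaks fall at different times.
customer_demand = {
    "batch_analytics": [80, 80, 20, 20],  # peaks overnight
    "web_inference": [20, 30, 90, 40],    # peaks midday
    "dev_sandbox": [10, 20, 30, 70],      # peaks in the evening
}


def diversity_benefit(demand):
    """Return (sum of individual peaks, peak of the combined load).

    The gap between the two is capacity a cloud provider does not have to
    build, because its customers do not all peak at once.
    """
    sum_of_peaks = sum(max(series) for series in demand.values())
    combined = [sum(period) for period in zip(*demand.values())]
    return sum_of_peaks, max(combined)


def committed_revenue(gpus, price_per_gpu_hour, hours):
    """Revenue under an assumed take-or-pay contract.

    The buyer pays for the contracted capacity whether or not it is used,
    so revenue depends on the commitment, not on utilization.
    """
    return gpus * price_per_gpu_hour * hours


sum_of_peaks, combined_peak = diversity_benefit(customer_demand)
print(f"Capacity if every peak were served separately: {sum_of_peaks} GPUs")
print(f"Capacity needed for the combined load: {combined_peak} GPUs")

annual = committed_revenue(gpus=1000, price_per_gpu_hour=2.0, hours=24 * 365)
print(f"Committed annual revenue: ${annual:,.0f}")
```

In this toy case the cloud model needs 140 GPUs to serve customers whose individual peaks sum to 240. The factory model gets no such averaging from a single workload, which is why the commitment has to be in place before the capacity is built.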

The distinction matters for capital allocation. A cloud provider is valued on revenue multiples calibrated to broad customer diversification, flexible demand, and commodity-pricing risk.

A compute factory is valued on the economics of its committed offtake: contract duration, counterparty quality, power cost basis, and the defensibility of its infrastructure specifications against the next workload generation.

Most valuation frameworks applied to CoreWeave at its IPO in March 2025 were cloud frameworks. The right framework was industrial.

Three Positions on the New Operator Category

For independent operators evaluating the dedicated AI compute category, CoreWeave’s trajectory identifies the four specifications required to compete. Rack power density above 40 kilowatts. Liquid cooling integrated from construction. Power cost basis negotiated at the grid interconnection level. Committed offtake from a customer whose workload requires dedicated capacity.

An operator who cannot meet all four specifications is a data center operator with GPU inventory.
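The four-part test reads naturally as a checklist. A minimal sketch follows, assuming a hypothetical `Facility` record; the field names and the example sites are invented for illustration, and only the 40 kW threshold comes from the text above.

```python
from dataclasses import dataclass

MIN_RACK_KW = 40.0  # threshold stated in the article


@dataclass
class Facility:
    """Hypothetical record of the four specifications for one site."""
    name: str
    rack_density_kw: float
    liquid_cooling_from_construction: bool
    power_priced_at_interconnection: bool
    has_committed_offtake: bool


def unmet_specifications(facility):
    """Return the names of the specifications the facility fails."""
    checks = {
        "rack density above 40 kW": facility.rack_density_kw > MIN_RACK_KW,
        "liquid cooling from construction": facility.liquid_cooling_from_construction,
        "power cost set at grid interconnection": facility.power_priced_at_interconnection,
        "committed offtake": facility.has_committed_offtake,
    }
    return [name for name, passed in checks.items() if not passed]


def classify(facility):
    """Apply the all-four-or-nothing rule from the text."""
    if unmet_specifications(facility):
        return "data center operator with GPU inventory"
    return "compute factory candidate"


# Two invented sites: one purpose-built, one retrofitted colocation hall.
purpose_built = Facility("Site A", 60.0, True, True, True)
retrofit = Facility("Site B", 15.0, False, True, False)

for site in (purpose_built, retrofit):
    print(site.name, "->", classify(site), unmet_specifications(site))
```

The rule is deliberately binary. A site that passes three of the four checks still lands in the second bucket, which matches the claim that the specifications qualify an operator only as a set.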

For infrastructure investors, the dedicated AI compute category requires a valuation methodology the cloud comparable set cannot provide. The relevant comparables are industrial operators with long-term offtake contracts: energy infrastructure, fiber networks, industrial logistics.

The metrics that matter are contract duration, power cost per kilowatt-hour, PUE at AI-workload density, and the technical qualification rate against hyperscaler procurement specifications.
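Two of these metrics combine into a single unit cost. PUE (power usage effectiveness) is total facility power divided by IT equipment power, so the energy cost of a GPU-hour is roughly the GPU's power draw, grossed up by PUE, times the power price. The sketch below uses hypothetical inputs (a 1 kW all-in draw per GPU, the PUE values, and the $0.05 per kWh price are assumptions for illustration only).

```python
def pue(total_facility_kw, it_load_kw):
    """Power usage effectiveness: total facility power over IT power."""
    if it_load_kw <= 0:
        raise ValueError("IT load must be positive")
    return total_facility_kw / it_load_kw


def energy_cost_per_gpu_hour(gpu_kw, pue_value, price_per_kwh):
    """Energy cost of running one GPU for one hour, including facility overhead."""
    return gpu_kw * pue_value * price_per_kwh


# Hypothetical facility: 12 MW drawn from the grid, 10 MW reaching IT equipment.
site_pue = pue(total_facility_kw=12_000, it_load_kw=10_000)

# Same GPU and power price, two different facility efficiencies.
efficient = energy_cost_per_gpu_hour(gpu_kw=1.0, pue_value=site_pue, price_per_kwh=0.05)
legacy = energy_cost_per_gpu_hour(gpu_kw=1.0, pue_value=1.6, price_per_kwh=0.05)

print(f"PUE: {site_pue:.2f}")
print(f"Energy cost per GPU-hour at PUE {site_pue:.2f}: ${efficient:.3f}")
print(f"Energy cost per GPU-hour at PUE 1.60: ${legacy:.3f}")
```

The difference per GPU-hour looks small in isolation. Multiplied across tens of thousands of GPUs running around the clock for the life of a multi-year contract, it becomes a structural cost gap between operators.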

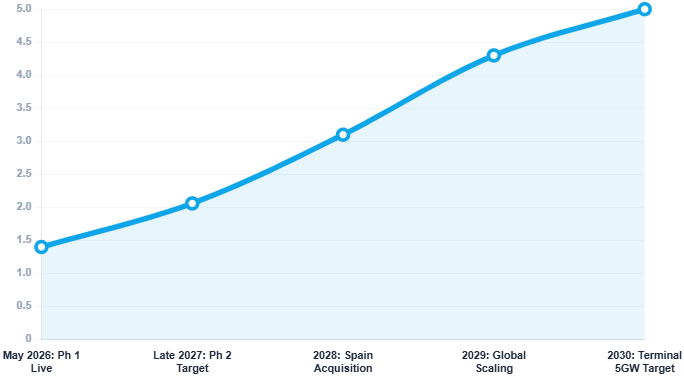

For public equity investors, CoreWeave’s IPO in March 2025 at a $23 billion valuation created the first public market benchmark for the dedicated compute factory category.

The valuation debate that surrounded the IPO (cloud multiple or infrastructure multiple, growth story or cash flow story) reflected the absence of a settled analytical framework for a category that did not exist two years before.

The compute factory framework this series has built resolves that debate. CoreWeave is an industrial operator. Its valuation belongs in the industrial comps, adjusted for the growth rate of the workload it serves.

The Arc Completes and Opens

This series opened with a financial services firm in the early 1980s building the first server cluster to replace the mainframe. The pattern has run without interruption since. IBM’s factory was bypassed by the client-server cluster.

The client-server cluster was bypassed by EC2. EC2 was not bypassed, but the factory it created became misaligned with the AI training workload AlexNet identified in 2012. The response to that misalignment is CoreWeave.

In 2025, the binding constraint of the compute factory era is committed grid power at gigawatt scale. Capital can procure GPU clusters. Capital can acquire land. Capital cannot skip a power interconnection queue or compress a grid upgrade on a timeline matching deployment pressure.

The operators who have secured large-scale power commitments and built the thermal and interconnect architecture to convert that power into GPU-dense compute output own the factory the intelligence economy requires.

Every prior factory was built to serve the markets that could pay for it first.

The intelligence economy’s most consequential output may be what it enables for markets that never had compute access at scale: countries that missed the mainframe era, missed the cloud era, and now see compute capacity as a core infrastructure imperative for the next thirty years.

The next compute factory will be built in markets this series has not yet covered.

The capital that positions ahead of that development will find a transition premium the current infrastructure era cannot yet see. That is where this analysis goes next.