Why Is Meta Spending $21 Billion on CoreWeave Instead of Its Own US Data Centers?

Take-or-pay GPU supply mechanics, NVIDIA Vera Rubin priority access, US grid constraints, hyperscaler self-build timelines, inference workload economics, neocloud capital structure.

Welcome to Global Data Center Hub. Join investors, operators, and innovators reading to stay ahead of the latest trends in the data center sector in developed and emerging markets globally.

How the Deal Is Structured

On April 9, 2026, CoreWeave and Meta announced an expanded long-term agreement valued at approximately $21 billion, running from 2027 through December 2032.

The deal extends a prior $14.2 billion contract signed in 2025, bringing total committed value to about $35 billion, making it one of the largest AI infrastructure agreements to date.

The structure is take-or-pay committed GPU capacity tied directly to physical infrastructure delivery, not elastic cloud usage. Meta pays for guaranteed compute access regardless of actual usage levels, locking in supply ahead of demand.

Meta cannot reduce its commitment even if internal data centers come online early, while CoreWeave cannot delay delivery without penalties. Flexibility is exchanged for certainty, with Meta securing early access to high-performance compute. The financing layer reinforces this, with CoreWeave’s $8.5 billion facility backed by $19.2 billion of Meta-linked contracts and effectively underwritten by Meta’s demand.

The Physical Commitments Behind the Agreement

Three structural realities determine where this capital lands and why internal development cannot substitute for it on the required timeline.

The inference workload shift is the first. The April 2026 agreement targets AI inference at scale, not model training. Training is episodic, centralized, and burst-intensive. Inference, serving billions of users across Facebook, Instagram, and WhatsApp, is continuous, distributed, and latency-constrained in ways a centralized campus cannot solve. CoreWeave’s multi-location architecture is built for this. Meta Platforms, Inc.’s internal data center program, optimized around training economics, is not.

The NVIDIA Vera Rubin platform is the second driver. CoreWeave, backed by a $2B NVIDIA investment and multi-generational silicon access, is among the first to deploy it in H2 2026. It delivers 50 PFLOPS FP4 inference per GPU vs. Blackwell’s 10, with 22 TB/s bandwidth (2.75x higher) and ~35x more inference per megawatt. With 190–230 kW liquid-cooled racks, it is purpose-built infrastructure retrofitted assets cannot compete. Meta’s contract converts early access into large-scale inference efficiency.

The US power grid is the third constraint. Meta’s $115–135B 2026 capex is limited by transmission queues and permitting delays that capital cannot accelerate. Northern Virginia and Silicon Valley are effectively grid-constrained. CoreWeave’s 32 facilities target 1.7 GW by 2026 and 5.0 GW by 2030, with land and power secured ahead of demand repricing. Meta cannot replicate that footprint on a 2026–2027 timeline.

The Risks Hidden Inside the Revenue Visibility

The CoreWeave–Meta deal sets a qualification bar most operators cannot meet. It requires NVIDIA silicon priority across multiple generations, multi-site US deployment at gigawatt scale, and a balance sheet capable of absorbing $30–35 billion in annual capex. Each dollar of revenue effectively requires ~$2.60 in capital investment.

The real constraint is not capital alone, but the sequencing of hardware access, operational execution, and contracted backlog needed to support investment-grade financing. Competing operators are facing a specification barrier, not a pricing market.

For investors, the key question is not CoreWeave’s ability to execute, but what Meta’s internal buildout means in years three to four of a six-year contract. Meta’s $115–135 billion 2026 capex will bring significant capacity online from 2027–2029, creating a structural inflection for third-party demand. While take-or-pay protects revenue through 2032, it does not protect renewals, and renegotiation will depend on how much internal capacity replaces external supply.

For public markets, concentration risk is the underpriced signal. Nine of CoreWeave’s top ten AI customers run frontier workloads on its platform, with no single customer above 35% of its $66.8 billion backlog. Microsoft’s share has been diluted by Meta, OpenAI, and Anthropic, but systemic exposure remains: disruption would simultaneously hit multiple leading AI models. The market is pricing visibility, not concentration risk.

Where Capital Moves Next

The US AI data center market is dividing along a single axis: infrastructure built from inception for the Vera Rubin era and infrastructure that is not. The distinction is not scale. It is specification.

Liquid cooling at 190-230 kW rack density, 800V DC power architecture, and land with transmission access capable of absorbing gigawatt-scale AI factory loads are the baseline. Assets that do not meet this standard will not qualify for the demand class CoreWeave currently holds regardless of location, brand, or repositioning capital applied after the fact.

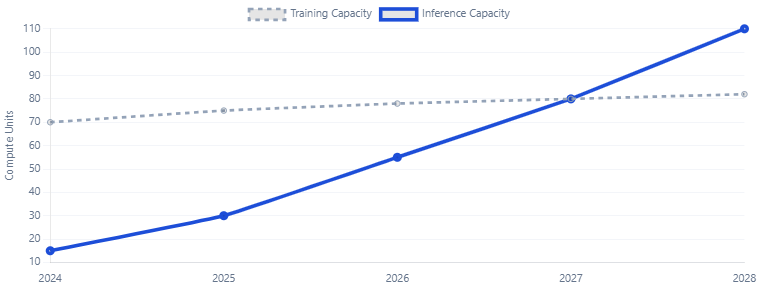

Inference is the structural driver. By 2027, it is expected to match training workloads, and by 2030, surpass them. This shift favors distributed, GPU-dense, liquid-cooled infrastructure as a distinct asset class. Markets with available power and permitting Ohio, Texas, the Carolinas, and the Midwest remain the primary deployable zones as Tier-1 regions reprice.

Meta’s $21 billion commitment signals a broader reality: even the largest hyperscalers cannot build internal capacity fast enough. The gap between internal delivery speed and external demand is now the core investable opportunity. Early movers in viable US power markets are locking in cost advantages that late entrants will have to price against CoreWeave’s $66.8 billion backlog.