The Neocloud Is Not Overflow. It Is the Third Pillar of AI Infrastructure

CoreWeave $66.8B backlog, FluidStack-Anthropic $50B offtake, Firmus Project Southgate, hyperscaler co-opetition, neocloud capital structure risk, sovereign compute

Welcome to Global Data Center Hub. Join investors, operators, and innovators reading to stay ahead of the latest trends in the data center sector in developed and emerging markets globally.

The Overflow Assumption Is Wrong

The neocloud is not transitional but a permanent tier in global AI infrastructure, and capital has not yet repriced it. Overflow-based allocations will face correction once backlog economics are fully underwritten.

The market still treats CoreWeave, Firmus, and FluidStack as tactical hyperscaler relief, but that framing breaks against a $66.8B backlog, a $50B offtake agreement, and Nvidia equity on a sovereign-aligned APAC operator.

These are not overflow signals. They are the capital structures of a durable, institutionally backed compute layer. The hyperscalers cannot replicate it on any competitive timeline.

The immediate trigger is CoreWeave’s April 2026 expansion with Meta. Twenty-one billion dollars added to an existing $14.2 billion commitment brings Meta’s total CoreWeave exposure to $35.2 billion through 2032.

Read it alongside Anthropic’s $50B commitment to FluidStack and Firmus’s $505M raise at a $5.5B valuation with Nvidia on the cap table, and the pattern is clear.

Institutional capital arrived before the classification debate resolved.

The Misalignment That Created the Neocloud

The neocloud category emerged from a structural misalignment that became clear in 2022. Frontier AI training exposed a gap hyperscale architecture could not close fast enough. AWS, Azure, and Google Cloud were optimized for elastic, multi-tenant, CPU-centric workloads.

Large-scale AI training required the opposite: fixed, high-density GPU clusters, bare-metal access, InfiniBand networking, and minimal virtualization overhead. CoreWeave, then a crypto mining operator, was the first to productize this architecture at scale.

By 2024, it reported $1.92B in revenue on 737% growth. The category had formed.

The question was whether it would persist or be absorbed. The April 2026 data resolves that question.

Three Data Points. None Are Projections.

Three data points define the current structural position. None are modeled projections.

CoreWeave holds a $66.8B backlog against $30–35B 2026 capex guidance, signaling pre-sold infrastructure rather than speculative buildout. It ended 2025 with 850MW across 43 data centers and 3.1GW under contract, with nine of the top ten AI model providers as customers. 2026 revenue guidance of $12–13B implies 134%–153% growth on an already scaled base.
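The arithmetic behind those CoreWeave figures can be sanity-checked with a short sketch. It uses only the numbers quoted above; pairing the low end of guidance with the low growth rate (and high with high) is an assumption, and the function names are illustrative.

```python
# Figures quoted in the article, in billions of US dollars.
BACKLOG = 66.8
CAPEX_GUIDANCE = (30.0, 35.0)
REVENUE_GUIDANCE = (12.0, 13.0)
IMPLIED_GROWTH = (1.34, 1.53)  # 134% and 153% written as fractions


def implied_base(guided_revenue: float, growth: float) -> float:
    """Prior-year revenue implied by a guided figure and its growth rate."""
    return guided_revenue / (1.0 + growth)


def coverage(backlog: float, capex: float) -> float:
    """Backlog expressed as a multiple of one year of capex."""
    return backlog / capex


# Assumption: low guidance pairs with low growth, high with high.
bases = [implied_base(r, g) for r, g in zip(REVENUE_GUIDANCE, IMPLIED_GROWTH)]
multiples = [coverage(BACKLOG, c) for c in CAPEX_GUIDANCE]

print("implied 2025 revenue base ($B):", [round(b, 2) for b in bases])
print("backlog / capex multiple:", [round(m, 2) for m in multiples])
```

Both ends of the guidance range imply a 2025 revenue base of roughly $5.1B, and the backlog covers between about 1.9x and 2.2x of guided 2026 capex, which is the sense in which the buildout is pre-sold.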

FluidStack’s $50B Anthropic deal is the largest neocloud offtake to date. Google has also provided $3.2B in backstops on TeraWulf contracts and $1.3B on the Abernathy JV, effectively underwriting an operator it also competes with in the GPU rental market.

Firmus raised $505M in April 2026 with Nvidia participation. Its Project Southgate targets 1.6GW across Australia and Tasmania on Vera Rubin architecture, alongside a hyperscaler deal worth over $600M annually.

For Firmus, APAC sovereign compute positioning is the core capital logic, not a secondary outcome.

Capital Cannot Buy Back Time

The dominant narrative frames the neocloud dynamic as a binary: hyperscalers will either acquire these operators or compete them into irrelevance. That framing misses the capital reality. The decisive input at stake is not GPU access. Any hyperscaler can purchase Nvidia hardware directly.

The constraint is the combination of secured power at scale, priority hardware allocations locked before the current generation, and signed offtake agreements that service debt before the next GPU generation arrives.

Grid connection wait times in most developed markets run seven years. CoreWeave, Firmus, and FluidStack have already secured their grid positions. Acquiring one of these operators is not buying a competitor. It is buying the one input that capital alone cannot produce on a compressed timeline: time.

The Constraints the Models Don’t Capture

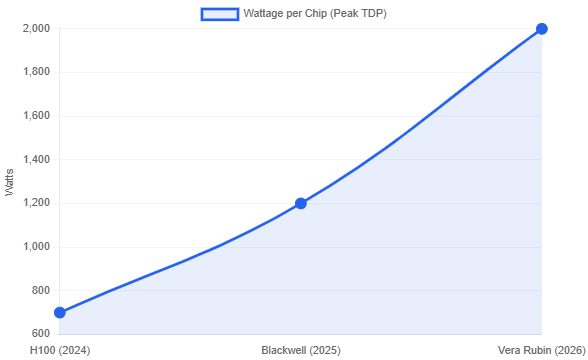

Independent operators evaluating neocloud partnerships face a qualification problem that is architectural, not commercial. Vera Rubin, Nvidia’s 2026 GPU platform, runs at 2,000W per chip and requires 48V power delivery, liquid cooling, and HBM4 bandwidth at 13 TB/s.

Operators without infrastructure designed years earlier cannot participate. The constraint is not capital, but past infrastructure decisions made before demand was visible.

Private equity faces a depreciation problem: GPUs are amortized over six years, but become obsolete in three to four. H100, Blackwell, and Vera Rubin cycles compress value faster than standard models assume.
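The size of that mismatch can be sketched in a few lines. The example assumes straight-line depreciation with zero salvage value, which the article does not specify, and normalizes purchase cost to 1.0; the function name is illustrative.

```python
def book_value_fraction(age_years: float, schedule_years: float) -> float:
    """Fraction of purchase cost still on the books at a given age.

    Assumes straight-line depreciation and zero salvage value.
    """
    return max(0.0, 1.0 - age_years / schedule_years)


SCHEDULE_YEARS = 6           # amortization period cited in the article
OBSOLESCENCE_YEARS = (3, 4)  # economic life cited in the article

for age in OBSOLESCENCE_YEARS:
    remaining = book_value_fraction(age, SCHEDULE_YEARS)
    print(f"obsolete at year {age}: {remaining:.0%} of cost still undepreciated")
```

Under these assumptions, a GPU that stops earning at year three still carries half its cost on the books, and one that lasts four years still carries a third. That residual is the value a six-year model fails to write down in time.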

The thesis shifts to treating backlog as infrastructure cash flow, not AI cycle risk. CoreWeave’s $66.8B backlog and FluidStack’s $50B offtake support this, with debt repayment dependent on utilization above 80%.
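One way to see how a utilization floor of this kind arises is a simple breakeven sketch. The model below is a deliberate simplification: it treats revenue as linear in utilization and lumps debt service and fixed operating costs into one number. The inputs are placeholders chosen so the threshold lands at 0.80 to mirror the figure in the text; they are not drawn from any operator's filings.

```python
def breakeven_utilization(fixed_obligations: float,
                          revenue_at_full_utilization: float) -> float:
    """Utilization at which revenue exactly covers fixed obligations.

    Simplifying assumption: revenue scales linearly with utilization.
    """
    if revenue_at_full_utilization <= 0:
        raise ValueError("revenue_at_full_utilization must be positive")
    return fixed_obligations / revenue_at_full_utilization


def covers_obligations(utilization: float,
                       fixed_obligations: float,
                       revenue_at_full_utilization: float) -> bool:
    """True if revenue at the given utilization meets fixed obligations."""
    return utilization * revenue_at_full_utilization >= fixed_obligations


# Hypothetical inputs, in arbitrary currency units per year.
FIXED_OBLIGATIONS = 80.0   # debt service plus fixed operating costs
FULL_REVENUE = 100.0       # revenue if every GPU-hour were sold

threshold = breakeven_utilization(FIXED_OBLIGATIONS, FULL_REVENUE)
print(f"breakeven utilization: {threshold:.0%}")
print("covered at 85%:", covers_obligations(0.85, FIXED_OBLIGATIONS, FULL_REVENUE))
print("covered at 75%:", covers_obligations(0.75, FIXED_OBLIGATIONS, FULL_REVENUE))
```

The point of the sketch is the sensitivity, not the specific numbers: with high fixed obligations, a small drop in utilization moves an operator from covering its debt to falling short, which is why contracted backlog matters more than spot demand.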

Public equity investors must now model hyperscaler dependency on neocloud balance sheets. Commitments from Microsoft, Meta, and Google signal a transfer of capex risk to these operators.

The internal build model is under strain from $600–700B in hyperscaler capex, while neoclouds operate at high leverage but with demand structurally locked in.

The Equilibrium Is Already Forming

The neocloud sector will consolidate, but not mainly through hyperscaler acquisition. The durable equilibrium is stratified co-opetition: hyperscalers act as anchor tenants on neocloud balance sheets, neoclouds deploy Nvidia’s most advanced silicon first, and sovereign governments stabilize demand in non-U.S. markets.

Firmus’s APAC positioning is the clearest current model for that third layer: Nvidia equity, sovereign energy alignment, and a hyperscaler offtake agreement structured before the regional demand signal peaked.

Operators and investors who establish neocloud infrastructure positions before Vera Rubin reaches volume deployment lock in pre-scarcity power rates and pre-shortage GPU allocations.

Those who wait for further maturity will pay 2028 prices for decisions that belong in 2026. The hyperscalers already moved. CoreWeave’s $66.8 billion backlog is the confirmation.
