What Does OpenAI's $122 Billion Mean for US Data Centers?

OpenAI's raise is not a funding story. It is a physical infrastructure story, and the US data center market is not ready for what it requires.

How the Capital Is Structured

On March 31, 2026, OpenAI raised $122 billion at an $852 billion valuation. The round was co-led by SoftBank with participation from Andreessen Horowitz, D.E. Shaw Ventures, MGX, TPG, and T. Rowe Price. Amazon (~$50B), Nvidia (~$30B), and SoftBank (~$30B) provided the majority, alongside a ~$4.7 billion undrawn credit facility.
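To make "provided the majority" concrete, the short Python sketch below adds up the three anchor commitments and compares them with the headline. It uses only the approximate figures quoted above. The variable and function names are illustrative, and it assumes the ~$4.7 billion credit facility sits outside the $122 billion total, which the reporting does not make explicit.

```python
# Approximate figures as quoted in this article, in billions of USD.
HEADLINE_RAISE_B = 122.0
anchor_commitments_b = {"Amazon": 50.0, "Nvidia": 30.0, "SoftBank": 30.0}


def anchor_share(commitments, headline):
    """Return (sum of commitments, that sum as a fraction of the headline)."""
    total = sum(commitments.values())
    return total, total / headline


# Assumption: the undrawn credit facility is not counted in the headline.
anchor_total_b, anchor_fraction = anchor_share(anchor_commitments_b, HEADLINE_RAISE_B)
print(f"Anchor total: ~${anchor_total_b:.0f}B ({anchor_fraction:.0%} of headline)")
```

On these rounded inputs, the three anchors account for about $110 billion, or roughly 90% of the headline figure.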

Capital is allocated across custom silicon, global data center buildout, and model development. Infrastructure is not a line item but the enabling layer. Without gigawatt-scale, purpose-built capacity, the projected compute expansion is not executable.

This gap between headline capital and executable infrastructure mirrors the dynamics analyzed in "Where Is Capital Flowing in the Global AI Data Center Buildout?", where deployment capacity, not funding, determines outcomes.

The Physical Commitments Behind the Raise

Three confirmed US infrastructure commitments define where this capital actually lands.

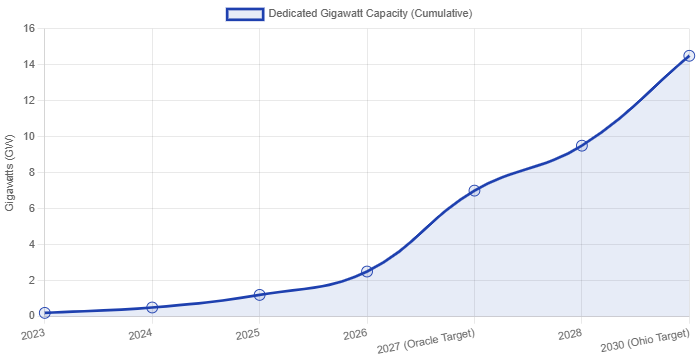

The Oracle agreement provides the clearest near-term anchor. OpenAI has committed to an approximately $300 billion, five-year cloud partnership with Oracle, delivering 4.5 gigawatts of data center capacity by 2027. This is not market-rate colocation. It is purpose-built AI infrastructure engineered to OpenAI’s thermal, power density, and cooling requirements, at a scale beyond conventional enterprise projects. The 2027 delivery timeline makes it the most time-constrained commitment in the pipeline.

The SoftBank Ohio campus represents the largest single-site commitment in US data center history. Through SB Energy, SoftBank is developing a 10-gigawatt AI campus in Piketon, Ohio, on a 3,700-acre former uranium enrichment site. The advantage is structural: brownfield land with transmission access, water availability, and prior permitting. Total investment is estimated at $30 billion to $40 billion, including roughly $4.2 billion for transmission upgrades.
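The Piketon figures can be turned into two quick ratios: implied investment per gigawatt and the share of the budget going to transmission. The Python sketch below is a back-of-envelope illustration using only the estimates quoted above. The function and key names are hypothetical, and the ratios inherit the uncertainty of the underlying estimates.

```python
# Estimates as quoted in this article; all dollar values in billions of USD.
CAMPUS_GW = 10.0
INVESTMENT_LOW_B, INVESTMENT_HIGH_B = 30.0, 40.0
TRANSMISSION_B = 4.2


def campus_ratios(low_b, high_b, transmission_b, gigawatts):
    """Return (low, high) ranges for cost per GW and transmission share."""
    return {
        "cost_per_gw_b": (low_b / gigawatts, high_b / gigawatts),
        # The share is smallest against the high estimate, largest against the low.
        "transmission_share": (transmission_b / high_b, transmission_b / low_b),
    }


ratios = campus_ratios(INVESTMENT_LOW_B, INVESTMENT_HIGH_B, TRANSMISSION_B, CAMPUS_GW)
per_gw_low, per_gw_high = ratios["cost_per_gw_b"]
share_low, share_high = ratios["transmission_share"]
print(f"Implied investment: ${per_gw_low:.1f}B to ${per_gw_high:.1f}B per GW")
print(f"Transmission share: {share_low:.1%} to {share_high:.1%} of total")
```

On these inputs the campus implies $3 billion to $4 billion per gigawatt, with transmission upgrades at roughly 10.5% to 14% of total investment.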

Project Stargate is the broadest commitment. Structured as a joint initiative between OpenAI, SoftBank, and Oracle, Stargate targets $100 billion in US AI infrastructure investment as its confirmed floor. The ceiling estimate reaches $500 billion. The domestic buildout is anchored by the Ohio campus and a network of purpose-built AI data centers across US markets with available power capacity and viable permitting timelines.

These three commitments represent confirmed demand for AI-grade US data center infrastructure at a scale the market has not previously been asked to absorb.

The Underwriting Variables That Matter

For independent data center operators, the structural implication is a specification crisis that most balance sheets are not positioned to address.

AI GPU clusters required by OpenAI and its hyperscaler partners operate at densities and thermal loads beyond legacy colocation design. Nvidia’s Blackwell generation requires liquid cooling, high-voltage distribution, and advanced thermal systems absent in most pre-2022 capacity. Repositioned assets without full system rebuilds will fail tenant qualification. Capital is concentrating in ground-up, AI-native developments, defining the new baseline.

For private equity and infrastructure investors, grid capacity is the decisive underwriting variable. The Ohio campus alone requires roughly $4.2 billion in transmission upgrades. In constrained markets such as Northern Virginia, Silicon Valley, and Phoenix, grid limits cap AI deployment regardless of facility quality. The constraint is the grid, not the building.

For public equity, the hyperscaler dynamic has shifted. Microsoft no longer holds exclusivity over OpenAI compute. Oracle, Amazon Web Services, and Google Cloud now share the workload, distributing infrastructure revenue across platforms and reducing prior concentration assumptions.

The capital structure is also recursive. Amazon’s ~$50 billion includes a large contingent component tied to IPO or AGI milestones. Nvidia’s investment recycles into GPU purchases. SoftBank is both investor and infrastructure developer. Effective deployable capital is below the $122 billion headline, while OpenAI’s reported ~$178 million daily burn becomes the more relevant variable for modeling medium-term demand.
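The relationship between the headline and the burn rate can be sketched as a simple runway calculation. The Python example below is illustrative only. It assumes a flat daily burn and no further funding, and the `deployable_fraction` haircuts are hypothetical placeholders for the contingent and recycled components described above, not reported figures.

```python
# Figures as reported in this article, in USD.
HEADLINE_RAISE_USD = 122e9
DAILY_BURN_USD = 178e6


def runway_days(capital_usd, daily_burn_usd):
    """Days of runway at a constant daily burn (a simplifying assumption)."""
    if daily_burn_usd <= 0:
        raise ValueError("daily burn must be positive")
    return capital_usd / daily_burn_usd


annual_burn_usd = DAILY_BURN_USD * 365
print(f"Annualized burn: ~${annual_burn_usd / 1e9:.0f}B")

# Hypothetical haircuts: the true deployable share is not disclosed.
for deployable_fraction in (1.0, 0.75, 0.5):
    days = runway_days(HEADLINE_RAISE_USD * deployable_fraction, DAILY_BURN_USD)
    print(f"{deployable_fraction:.0%} deployable: ~{days:.0f} days ({days / 365:.1f} years)")
```

At the full headline, the reported burn implies about 685 days of runway, under two years, and an annualized burn of roughly $65 billion. Any haircut to deployable capital shortens that window proportionally.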

This reset in design standards reflects the same shift highlighted in "19 key takeaways from Jensen Huang’s NVIDIA GTC 2026 keynote", where AI infrastructure is engineered as a fully integrated system rather than modular capacity.

Where Capital Moves Next

The US data center market is bifurcating. The divide is not between large operators and small ones. It is between AI-native infrastructure and everything else.

AI-native denotes purpose-built design from inception: liquid cooling, high-voltage distribution for GPU density, and land with scalable grid access. Projects capturing OpenAI-level demand meet this standard. Legacy assets with AI positioning do not.

US AI data center expansion is constrained by transmission capacity and permitting speed. Northern Virginia and Silicon Valley are effectively grid-limited. Scalable markets include Ohio, Texas, Wyoming, the Carolinas, and parts of the Midwest with industrial land and existing interconnect. Positioning must occur before pricing adjusts.

SoftBank committed to Piketon before the market priced AI campus land. The operators and developers who make equivalent decisions in the remaining viable US markets this year will hold the structural advantage when the next confirmed $100 billion infrastructure commitment reaches site selection. Those who wait will underwrite at the premium SoftBank avoided.

The bifurcation accelerates from here. As OpenAI’s infrastructure requirements become the de facto specification standard for AI-grade tenants across the market, the qualification bar for every operator competing for this demand class rises with it. The window to reposition will not stay open indefinitely.